The End of the AI Buffet

Behind Anthropic's crackdown on third-party tools lies a much bigger shift: the era of subsidised compute is over

On the morning of April 5th 2026, developers across the world opened their terminals to find their autonomous coding agents had gone silent. The day before, Anthropic had enforced a rule that thousands had been bending for months: you cannot pipe your Claude subscription through third-party tools like OpenClaw. If you want to run autonomous agents, use the API and pay by the token. The backlash was immediate: walled garden, bait-and-switch, the party is over. It didn’t help that OpenClaw’s creator had already been hired by OpenAI after Anthropic hit the project with a cease-and-desist weeks earlier, which made the whole episode feel very pointed.

A lot of the commentary has focused on whether the restriction was fair - and predictably, opinions split along lines of self-interest. Others have tried to dig into the strategic logic, which is more interesting, but often miss either the nuance or the bigger picture. One important detail that gets lost: this wasn’t even a new policy. It was a restatement of terms that already existed, enforced via a technical block that closed an unofficial OAuth workaround. So the more revealing question isn’t whether it was fair - it’s what this tells us about where AI companies are heading, and what that means for anyone building with or on top of AI.

Anthropic generates more revenue than OpenAI with a fraction of the users - let’s go through why by analysing Anthropic’s playbook.

TL;DR: It’s just capitalism, silly: First you subsidise, then you capture market share, then you extract $.

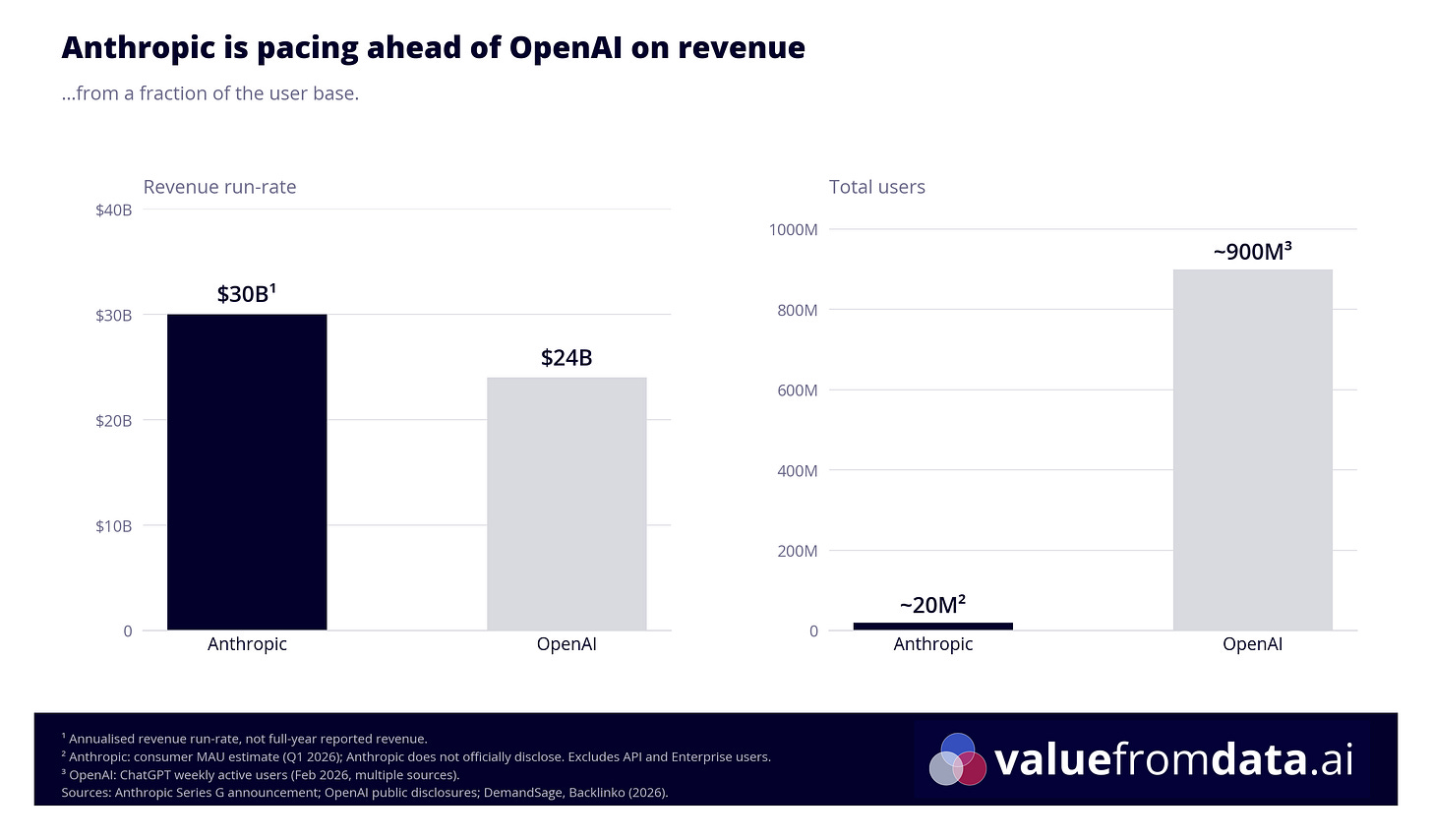

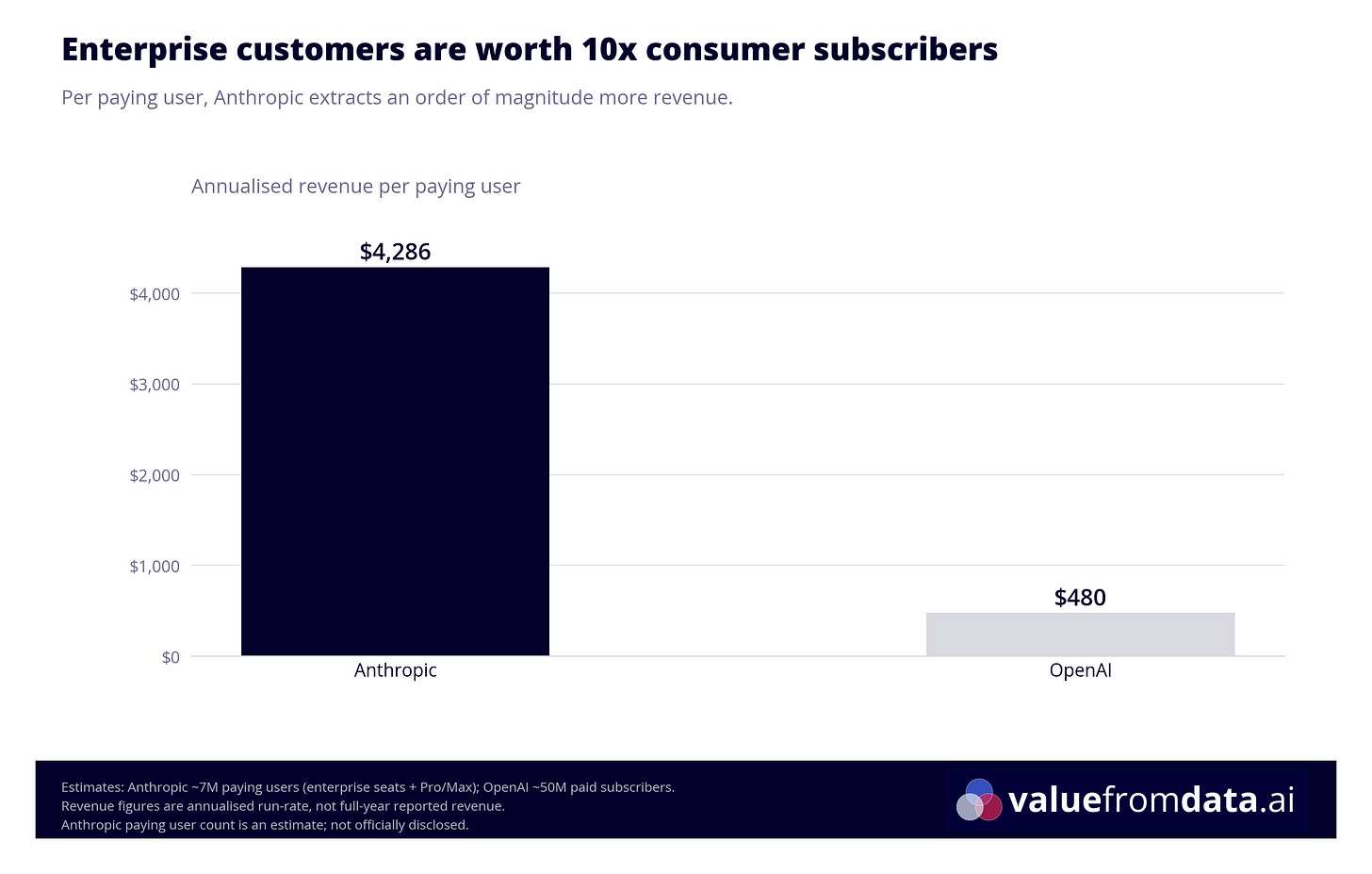

“Anthropic is generating more revenue - with roughly 5% of OpenAI’s user base.”

Slightly longer TL;DR:

The models are commoditised; the real business is the platform & application layers around the LLMs.

Anthropic generates more revenue than OpenAI with a fraction of the users, by going after Enterprise deals.

The OpenClaw ban, Claude Design, Managed Agents and the marketplace are all moves towards one play: for Anthropic to become the place where work happens, and to build a moat around it.

The pattern is old: subsidise to capture, then extract once you’re indispensable. See: Amazon, Uber, Facebook (to name modern examples - the practice wasn’t invented by Silicon Valley).

Lock-in isn’t uniform. Claude Code is mostly portable; Claude for Enterprise isn’t. You should know which you’re buying.

So, strap in. Today’s article is a long one (and one I’ve begrudgingly been rewriting for the past few weeks as new news kept coming in...). We’ll cover what Anthropic actually sells, why the economics of the subscription broke, why models are a weak moat, the platform play Anthropic is running instead, and what it all means for individual power users, enterprise buyers, and anyone building products on top of LLMs.

Let’s get to it.

What Anthropic actually sells

Before we get into the strategy, it’s worth being precise about the products, because I’ve seen lots of people get this wrong (even AI developers and strategists), in ways that lead to some genuinely confused takes.

Anthropic sells access to Claude in two fundamentally different ways:

The API is pay-as-you-go. You send tokens, you pay per token. It’s aimed at developers and enterprises building products on top of Claude. No limits other than your budget.

The subscription plans (Pro at $20/month, Max at $100 or $200/month) give you a monthly allowance - a bit like a phone plan. You get access to Claude on the web, Claude Code (the AI coding tool), and Claude Co-Work (the business collaboration tool). Hit your limit, and you either wait for it to reset or top up with extra usage.

The key distinction: the subscription gives you access to Anthropic’s applications, not just the raw model. In the early days, the chat interface was probably not much more than a thin wrapper around the API. Today, Claude on the web, Claude Code, and Co-Work are substantially different products - with custom prompts, safety layers, built-in connectors to things like Google Drive, and features that don’t exist at the API level at all.

Think of it like a car1. The model - whether it’s the powerful Opus, the versatile Sonnet, or the nimble Haiku - is the engine. You can buy that engine in different sizes: your 1.6 litre for everyday driving, your 2.4 for more serious work, your turbocharged option for when you need everything it’s got. The API is buying the engine directly. The subscription plans are buying the whole car - engine, body, GPS, heated seats, the lot. Different cars for different missions: Claude on the web is the family SUV, Claude Code is the pickup truck, Co-Work is the company fleet vehicle.

The April 4th change only affected subscription users. If you’re on the API, nothing changed. This distinction is easy to miss - I’ve seen it glossed over not just in Reddit threads but in pieces by people who write about AI strategy professionally. It’s load-bearing, so it’s worth keeping clear throughout this piece: the subscription and the API are fundamentally different products, and conflating them produces a fundamentally different read of what happened.

“It’s as if a single buffet diner started taking boxes home for their entire extended family”

The economics of subsidisation

Here’s the thing about subscription plans: they’re not priced at cost. They’re priced to attract users. To understand why this matters, it helps to think in three levels.

Level one: what it costs Anthropic to serve a token. This is the production cost - the compute, the electricity, the amortised cost of training - plus the cost of developing the products that serve those tokens (Claude.ai, Co-Work, Claude Code etc.) and other overheads. Anthropic doesn’t publish this, but it’s the baseline that everything else has to cover.

Level two: what Anthropic charges on the API. At standard rates, Claude Sonnet costs $3.00 per million input tokens and $15.00 per million output tokens. This is the “menu price” - what you pay if you buy the raw ingredient.

Level three: what the subscription costs. This is the meal deal. Pret A Manger charges you £8.50 for a sandwich, drink and snack separately. The meal deal gets you all three for £6.50. The discount is real but modest. Now imagine the meal deal was £1. That’s roughly the ratio between API rates and subscription pricing for a heavy power user - except it’s not quite right either, because even the £8.50 Pret price isn’t the cost of the ingredients to Pret. There’s a margin there. So you have three distinct prices: what it costs to make the thing, what you pay for it in pieces, and what you pay for the bundle. In AI, those three numbers diverge dramatically.

How dramatically? Developer benchmarks suggest that heavy users on the $200/month Max plan are paying somewhere between 1/18th and 1/135th of what the equivalent API usage would cost. One widely-shared figure making the rounds on Reddit was $27,000 of API-equivalent compute in a single billing cycle on a $200 plan. After digging, I couldn’t find a trustworthy primary source for that number - $5,000 per month is a more defensible upper bound based on published developer benchmarks.
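The multiples quoted above can be sanity-checked against the $200 plan price with simple arithmetic. A short Python sketch using only figures from the text: the 135x upper end of the range corresponds exactly to the unsourced $27,000 figure, while the more defensible $5,000 ceiling works out to 25x.

```python
MAX_PLAN_USD = 200  # monthly price of the Max plan discussed in the text


def api_multiple(api_equivalent_usd: float, plan_usd: float = MAX_PLAN_USD) -> float:
    """How many times more the same month of usage would cost at API rates."""
    return api_equivalent_usd / plan_usd


def api_equivalent(multiple: float, plan_usd: float = MAX_PLAN_USD) -> float:
    """Monthly API-rate spend implied by a given multiple."""
    return multiple * plan_usd


print(f"18x -> ${api_equivalent(18):,.0f}/month")    # 18x -> $3,600/month
print(f"135x -> ${api_equivalent(135):,.0f}/month")  # 135x -> $27,000/month
print(f"$5,000/month -> {api_multiple(5_000):.0f}x")    # $5,000/month -> 25x
print(f"$27,000/month -> {api_multiple(27_000):.0f}x")  # $27,000/month -> 135x
```

In other words, the bottom of the quoted range (roughly $3,600 a month) sits comfortably under the $5,000 bound; it is only the top of the range that leans on the figure nobody can source.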

The subscription model works - as long as it’s being used at human speed. The distribution of users is shaped like an insurance pool: most subscribers consume well below their theoretical limit, and that slack subsidises the heavy users. It’s the same logic as an all-you-can-eat buffet. It breaks when someone shows up with a van.

Autonomous AI agents don’t type. They don’t take lunch breaks. A developer running an agent in a continuous loop through their subscription wasn’t stretching the model - they were consuming $1,000 to $5,000 of compute per day. The buffet analogy doesn’t quite capture it: it’s as if a single diner started taking boxes home for their entire extended family.

There’s also a technical dimension that makes this more expensive than it sounds. Anthropic’s first-party tools are engineered to maximise prompt cache hit rates. When Claude Code sends the same codebase context back to the model repeatedly, that context is served from a cache rather than reprocessed from scratch. The difference is large: at Sonnet list prices, a cache hit costs $0.30 per million input tokens, versus $3.00 for uncached input and $3.75 for writing the context into the cache in the first place - a 90-92% reduction on every repeat. Third-party tools using unofficial OAuth workarounds often bypassed this caching entirely. Every request was a cold start.
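To see why caching matters so much for agents, here is a rough Python sketch of the input-token bill for an agent loop that re-sends the same context on every turn. The prices are assumptions based on Anthropic’s published Sonnet-class list pricing ($3.00 per million uncached input tokens, $3.75 per million to write a prefix into the cache, $0.30 per million to read it back); the 100,000-token context and 200 turns are hypothetical. It deliberately ignores output tokens, the new tokens appended each turn, and cache expiry, so treat it as an order-of-magnitude illustration rather than a billing model.

```python
# Assumed Sonnet-class list prices, USD per million input tokens.
BASE_INPUT = 3.00   # uncached input
CACHE_WRITE = 3.75  # first request: the shared prefix is written into the cache
CACHE_READ = 0.30   # later requests: the prefix is served from the cache


def loop_input_cost(context_tokens: int, turns: int, cached: bool) -> float:
    """Input cost of re-sending the same context prefix on every turn of an agent loop.

    Simplified: ignores output tokens, per-turn new tokens and cache expiry.
    """
    millions = context_tokens / 1_000_000
    if not cached:
        # Every turn pays full price for the whole context: the "cold start" case.
        return turns * millions * BASE_INPUT
    # The first turn pays to write the prefix; every later turn is a cache hit.
    return millions * CACHE_WRITE + (turns - 1) * millions * CACHE_READ


cold = loop_input_cost(100_000, turns=200, cached=False)
warm = loop_input_cost(100_000, turns=200, cached=True)
print(f"uncached ${cold:.2f} vs cached ${warm:.2f} ({cold / warm:.1f}x)")
```

With these assumptions the uncached loop costs $60 in input tokens against roughly $6.35 cached. As the loop gets longer, the one-off cache write matters less and the gap approaches the full 10x ratio between uncached and cached input prices.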

So the restriction wasn’t just about who gets to use the subscription. It was about closing an exploit that was significantly more expensive to serve than the same usage through Anthropic’s own tools.

But it’s not just about infrastructure

If this were purely a capacity issue, Anthropic could have imposed stricter rate limits and called it a day. They didn’t. Instead, four days after blocking third-party harnesses, they launched Claude Managed Agents - their own infrastructure for running autonomous AI workflows, offering sandboxed execution, state management, and multi-agent coordination.

Whether Claude Managed Agents is a direct substitute for OpenClaw is debatable. OpenClaw ran on your local machine and routed through your subscription credentials; Managed Agents is cloud-hosted infrastructure you access via the API. They’re in the same category - both designed for autonomous agent workflows - but they’re architecturally different products. What’s not debatable is the timing.

The timing tells you everything.

This is the real story: Anthropic isn’t just protecting its margins. It’s building a platform. And to understand why that’s the right strategic move, you first need to understand why the model alone can’t sustain a business.

Why models are a weak moat

When OpenAI released GPT-4, it looked like a genuine breakthrough - the kind of lead that might last years. Within little more than a year, Claude 3.5 Sonnet and Google’s Gemini matched or exceeded it on most benchmarks. Open-source models like Meta’s Llama 3 and Google’s Gemma closed the gap further. Today, model capabilities converge fast, and for familiar reasons: shared talent pools, reverse engineering, the same training data floating around the internet, and - let’s be honest - some more questionable practices, like reports of Chinese labs training models cheaply by firing millions of queries at Claude.

The open-source pressure adds a dimension that’s easy to underestimate. It’s not just that the paid models are catching up with each other - it’s that the free models are getting close enough to “good enough” for a growing range of use cases. If Llama 3 handles 80% of your customer service queries with no per-token fee, the question of whether Claude Opus does it 15% better starts to feel less compelling.

This isn’t paranoia; it’s just good business. Every enterprise LLM project I’ve been involved with has treated model portability as an explicit design requirement, not an afterthought. Solution architects sit down at the start of a project and map out the single-vendor risks - what happens if the provider deprecates a model, puts prices up, gets acquired, or gets compromised - and then design around them. Retrieval layers, prompt templates, evaluation harnesses, and tool integrations all sit behind an abstraction that lets you swap the underlying model without rewriting the stack. When a critical component has only one supplier, mature engineering organisations default to building an exit route. LLMs are no different.

The foundation labs have essentially facilitated this themselves. When GPT-3.5 was deprecated, companies that had hardcoded the model name suddenly had migration work on their hands. The industry’s response was to build routing and abstraction layers that make switching vendors roughly as easy as switching model versions - at least from an infrastructure standpoint. System prompts and tool integrations still need updating, but that’s manageable work, not a migration.
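A minimal Python sketch of the kind of abstraction layer described above. Everything here is hypothetical: `ChatModel`, `StubModel` and `ModelRouter` are illustrative names, and the stub models stand in for adapters that would wrap each vendor’s real SDK. The point is structural: application code depends only on the interface, per-model system prompts live in configuration, and a deprecated or unavailable model falls through to the next one in the list.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


class ChatModel(Protocol):
    """The only model interface application code is allowed to depend on."""
    name: str

    def complete(self, system: str, user: str) -> str: ...


@dataclass
class StubModel:
    """Hypothetical stand-in for a vendor adapter; a real one would wrap that vendor's SDK."""
    name: str
    reply: Callable[[str, str], str]

    def complete(self, system: str, user: str) -> str:
        return self.reply(system, user)


class ModelRouter:
    """Sends each request to the first model in the list that succeeds."""

    def __init__(self, models: list[ChatModel], prompts: dict[str, str]):
        self.models = models
        # Per-model system prompts: the part that still needs tuning when you switch vendors.
        self.prompts = prompts

    def complete(self, user: str) -> tuple[str, str]:
        errors = []
        for model in self.models:
            system = self.prompts.get(model.name, self.prompts["default"])
            try:
                return model.name, model.complete(system, user)
            except Exception as exc:  # e.g. a deprecated model, an outage, a quota error
                errors.append(f"{model.name}: {exc}")
        raise RuntimeError("all models failed: " + "; ".join(errors))


def retired_model(system: str, user: str) -> str:
    raise ConnectionError("model deprecated")


router = ModelRouter(
    models=[
        StubModel("vendor-a-large", retired_model),
        StubModel("self-hosted-open", lambda s, u: f"[{s}] {u.upper()}"),
    ],
    prompts={"default": "be concise"},
)
print(router.complete("hello"))  # ('self-hosted-open', '[be concise] HELLO')
```

Swapping vendors then means adding an adapter and editing the model list and prompt table, not touching the application code that calls `router.complete`.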

“Either way, the era of infinite subsidised compute is over. Plan accordingly”

Then there’s the sovereignty dimension. A significant and growing motivation for enterprises - particularly in Europe - to self-host or choose European providers is neither cost nor capability. It’s privacy and reduced dependence on US infrastructure. The CLOUD Act, passed in 2018, gives US law enforcement the ability to compel US-headquartered cloud providers to hand over data stored anywhere, including EU data centres. AWS’s “European Sovereign Cloud” has already attracted legal questions about whether its separate corporate structure is sufficient to escape this jurisdiction. For regulated institutions and European public bodies, that’s not a theoretical risk - and it’s driving serious deal flow to providers like Mistral, which has landed significant enterprise partnerships with HSBC, CMA CGM, and French and German government bodies building sovereign AI stacks outside US cloud infrastructure2.

Intercom is the poster child for the commercial case. The customer service platform initially built its AI features on OpenAI’s APIs. When costs got too high, they switched to Anthropic - migrating their Fin AI agent from GPT to Claude in 2024. Then, as volumes scaled, they went further: their newer Fin Apex model is a post-trained domain-specific model Intercom runs themselves, which their Chief AI Officer has said saves the company around $250,000 per month at roughly one-fifth the cost of calling frontier models directly, while outperforming them on customer-service benchmarks.
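The two Intercom figures can be cross-checked with one line of arithmetic. Assuming both refer to the same monthly workload (which isn’t stated), saving $250,000 a month while paying one-fifth of the frontier price implies a frontier bill of about $312,500 a month and an in-house bill of about $62,500. This is a back-of-envelope sketch, not a figure Intercom has published.

```python
def implied_monthly_costs(savings_usd: float, cost_ratio: float) -> tuple[float, float]:
    """Back out (frontier_cost, in_house_cost) from monthly savings and in_house / frontier.

    savings = frontier - in_house = frontier * (1 - cost_ratio)
    """
    frontier = savings_usd / (1 - cost_ratio)
    return frontier, frontier * cost_ratio


frontier, in_house = implied_monthly_costs(250_000, 1 / 5)
print(f"frontier ~${frontier:,.0f}/month, in-house ~${in_house:,.0f}/month")
```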

This isn’t just a tech company story. Bloomberg went the furthest and trained BloombergGPT - a 50-billion-parameter model built from scratch on four decades of proprietary financial data, now embedded in the Terminal. JPMorgan took the more common path: it banned ChatGPT for employees in 2023 citing data-control concerns, then built its in-house LLM Suite - a portal that routes to commercial models through its own controlled infrastructure - for 60,000+ staff. The pattern is consistent: once you’ve proved the value of LLM-powered features using expensive commercial APIs, the natural next question is whether you could run something cheaper or more controlled yourself.

One counterpoint worth acknowledging: multi-year agreements with spend commitments do create a degree of lock-in. Over 1,000 enterprise customers now spend more than $1 million annually on Claude, and many access it through cloud-channel agreements (like AWS’s Enterprise Discount Programme) that involve committed spend. But enterprises who understand that models are a commodity tend to negotiate those commitments carefully - and the fact that switching is structurally easy keeps the leverage somewhat balanced. The lock-in through contract terms is real but partial.

The upshot: selling model access is a commodity business, getting more commoditised by the month. If Anthropic’s entire revenue came from API tokens, they’d be in a permanent price war against OpenAI, Google, and every open-source project with a GPU cluster.

The application layer play

So Anthropic is doing what every smart infrastructure company eventually does: moving up the stack.

But framing this as just a platform play - moving up the stack, building lock-in - misses what makes it different from every platform play that came before. Previous platforms gave you a suite of tools under one roof - Google Workspace gave you Docs, Sheets, and Slides, but nobody thinks of their Google homepage as the place where work happens. It’s a launcher. Even Notion, which came closest to the “everything tool” ideal, became a coordination layer for companies that adopted it fully - not a replacement for the surfaces where the real work gets done. Developers still wrote code in an IDE, designers still designed in Figma, analysts still built dashboards in Tableau. Notion tracked and organised the work. It didn’t do the work.

What Anthropic is attempting is different in kind: a single conversational interface where the work itself happens. Claude Code has developers writing software through conversation. Claude Design, launched last week, extends the same idea to visual work - prototypes, slides, interfaces - and sent Figma’s stock down 5% on the day. Co-Work does it for business collaboration. The surface keeps expanding, and the unifying thread isn’t a shared file system or a single login - it’s one AI that knows your context across everything you do.

It’s easy to see the Claude “spinoffs” - Claude on the web, Claude Code, Co-Work, the new marketplace, Claude Managed Agents - as just “features of Claude”, but they’re really something much more noteworthy: the beginnings of an ecosystem designed to be hard to leave.

Different Anthropic products create very different degrees of lock-in.

Claude for Enterprise - shared projects, conversation history, company connectors, SSO integration, role-based access control, audit logging - creates deep lock-in. Your organisation’s context, permissions, and workflows live inside Anthropic’s application. Migrating that to a competitor is an ERP-scale headache.

Claude Code, by contrast, creates almost none. Your codebase lives in your repo. Your CLAUDE.md files, your architectural guidelines, your custom commands - all portable markdown. If a better coding tool shows up tomorrow, you can switch with minimal friction.

Claude Code does create something else, though: the kind of product that developers actually want. That matters in organisations. When a tool becomes something your users love rather than something they’re instructed to use, it earns a kind of stickiness that procurement decisions can erode but not easily eliminate.

That said, enterprise cost optimisation has a long history of overriding user preference. The migration from Tableau to Power BI started happening long before Power BI was the better product - cost was the driver. The same pattern played out with Zoom to Google Meet, and Slack to Microsoft Teams, especially among traditional enterprises with large IT footprints. Saving 20% on software spend often wins over 20% better quality of output. Anthropic is betting that Claude Code’s developer affection can hold against that pressure. It’s a real bet.

Then there’s the marketplace. Launched in March 2026 with six vetted partners (GitLab, Snowflake, Harvey, Replit, Rogo, Lovable Labs), it operates on a zero-commission model with consolidated billing. Enterprise customers can apply their existing Claude compute commitments toward partner subscriptions. Anthropic handles the master service agreement, the compliance vetting, the invoicing.

This playbook has deep historical roots - it predates Amazon by a century. But the mechanics are worth spelling out for anyone who hasn’t watched it play out, because it tends to move slowly and then all at once. You attract partners with generous terms (zero commission, easy onboarding). You control the distribution channel and gain visibility into which integrations generate the most value. The generous terms gradually tighten once you’re indispensable. And the most successful third-party capabilities tend to get absorbed into the first party. Facebook did this with its developer platform - opening it generously to app developers in 2007, then progressively restricting Graph API access, introducing pay-to-play for Pages reach, and replicating the most successful third-party features (Stories, check-ins, events) natively. Social reader apps built on the platform were effectively killed when Facebook changed the feed algorithm without warning. The Amazon Basics story (covered in the next section) is another version. Carnegie Steel did it with vertical integration over a century ago. It keeps working because the asymmetry of information and distribution keeps favouring the platform.

Anthropic has already demonstrated willingness to act on this pattern. The OpenClaw restriction is the proof: a popular third-party tool emerged, it lost access to subsidised compute, and four days later Anthropic launched its own product in the same category. Whether the marketplace follows the same arc remains to be seen - but the conditions are all present.

And then there’s Routines, released in April 2026 as a research preview: saved Claude Code configurations - a prompt, a repo, a set of connectors - that run automatically on Anthropic-managed cloud infrastructure, triggered by a schedule, an HTTP endpoint, or a GitHub event. The nightly PR review you set up on Monday keeps running whether your laptop is open or not. It’s a useful product, and it’s also another layer of lock-in that used to live somewhere else. The cron job on your own server, the GitHub Action in your own CI, the webhook handler on your own infrastructure - those are all things you own and can point at any model. A routine is a workflow that lives inside Anthropic’s infrastructure, scoped to your Anthropic account and run by Anthropic’s scheduler. Migrating it isn’t rewriting a prompt; it’s rebuilding the plumbing you no longer owned.

I want to pause here and call out something else: this isn’t only good for Anthropic and their growth prospects, it’s exciting for end-users and the future of work too. A single surface where real work gets done can be transformational for how we work (and how much it sucks or doesn’t suck). It’s what’s made Claude Code so compelling for me and my ADHD brain - but that’s a story for another article.

It’s not just about creating a moat

Most companies wouldn’t lose sleep over nudging consumers away from an unsafe third-party product they don’t own. Anthropic’s position is different - their entire brand is built on being the safety-first AI lab, which is precisely why a significant proportion of enterprises trust them specifically. “We can’t guarantee safety in environments we don’t control” is a statement that lands very differently from Anthropic than it would from OpenAI or Google. It’s consistent with their identity in a way that makes it genuinely credible rather than post-hoc rationalisation.

Anthropic genuinely cares about AI safety. I realise that sounds like corporate boilerplate, but the risks with OpenClaw specifically were real, and I’ve been vocal about them.

Two concrete concerns. First, the AI-going-haywire risk: Summer Yue, the Director of Alignment at Meta Superintelligence Labs, had her OpenClaw bot start deleting her entire inbox - despite weeks of testing with a dummy account and carefully refined instruction prompts. If she can’t get the guardrails right, the median user definitely can’t.

Second, the security risk: a cybersecurity researcher created the most popular skill on ClawHub (OpenClaw’s skill marketplace) and later revealed it contained a trojan that could steal SSH keys. He did it to prove the vulnerability, not to exploit it. Next time, it might not be a white hat. Even “read-only” email access means a compromised agent can read your 2FA codes - which can be enough for an attacker to take over your accounts and lock you out.

The restriction is primarily strategic and economic. But the safety motivation is real - and more credible here than it would be from anyone else in this space.

And here’s the thing often missed by cynics: values-based positioning isn’t just good PR, it’s often good business. Users are already switching out of ChatGPT and into Claude off the back of the Department of War story - not because Claude is measurably better at coding or writing, but because they don’t want their prompts feeding into defence contracts. Apple has built one of the most valuable companies on the planet on a similar proposition: a smaller, higher-margin customer base that pays a premium for privacy and control. You can see the shape of the same trade in Anthropic’s positioning. A safety-first, values-consistent brand attracts a particular kind of enterprise buyer - often a more conservative, more regulated, higher-value one - and holds onto them more reliably. The users you earn this way are also the ones least likely to leave for a 15% discount.

The 3X lens

So the platform is growing, the safety brand is credible, and the enterprise contracts are landing. The natural question: is Anthropic already in squeeze mode?

Kent Beck’s 3X framework offers a useful lens. The framework describes three phases: Explore (searching for product-market fit), Expand (scaling what works), and Extract (optimising for profit).

The temptation is to say Anthropic is transitioning from Expand to Extract - tightening the screws, ending the subsidies, locking in enterprise contracts. Some of the Reddit commentary frames it exactly this way: the party is over, the bait-and-switch is happening.

I think the reality is more nuanced. Different products are in different phases. Claude on the web is solidly in Expand - broad adoption, rapid feature iteration. Claude Code and Co-Work are arguably still in Explore - new products finding their use cases and user base. The marketplace is early Explore. None of these feel like Extract yet.

What is happening is that Anthropic is making rational Expand-phase adjustments. Cutting subsidies to unsustainable power users. Nudging developers toward first-party tools. Signing enterprise contracts while demand is hot. These look like Extract moves from the outside, but they’re actually about building the foundation for a much larger Expand phase - one centred on the enterprise, not the individual subscriber.

The historical pattern is well-established - and well worth naming, because it tends to get called a conspiracy when it’s really just a playbook. Amazon was unprofitable for nearly seven years before posting its first profitable quarter in Q4 2001. The marketplace was the bait: attract third-party sellers with generous terms and low friction, build the largest product catalogue in e-commerce, gain unparalleled visibility into what sells. The extraction came later. Congressional testimony confirmed that Amazon routinely used third-party seller data to identify high-volume products, then launched competing house-label versions under 400+ brands - including Amazon Basics. The merchants who built the platform’s volume were the data source for the products that competed with them.

This pattern is by no means exclusive to Amazon:

Facebook’s developer platform opened generously to app developers, then progressively squeezed access as Facebook built the most popular features natively. News Feed changes in 2012 effectively killed social reader apps (Washington Post Social Reader, Guardian Social) and viral social apps like Socialcam and Viddy that had been built entirely on Facebook’s distribution infrastructure.

Carnegie Steel, over a century ago, vertically integrated the entire steel supply chain - iron ore, ships, railroads - until competitors couldn’t access inputs at competitive prices.

The dynamics of Chinese manufacturing are a different but related version: enter a market with prices that incumbents can’t match, dominate distribution, then operate from a position of strength. Solar panels are the canonical example.

Uber did the consumer version: subsidise rides below taxi prices using venture capital to habituate consumers and crush incumbents, then raise prices once market dominance is established. In many cities, Uber now costs more than a traditional taxi.

This isn’t a conspiracy. It’s not even a criticism from a business or technology standpoint. (Okay, maybe a little - it’s at least a gripe about how the venture capital model tends to resolve. But that’s a structural observation about capitalism, not specific to Anthropic.) The pattern is: subsidise to capture, then extract once you’re indispensable. The only question is whether the timing is right - and for Anthropic, the numbers suggest it might be.

A tale of two companies

In most people’s minds, ChatGPT is synonymous with AI, and OpenAI is the dominant player. And, well, for a while that was kind of the case. But things have changed a lot in the past year.

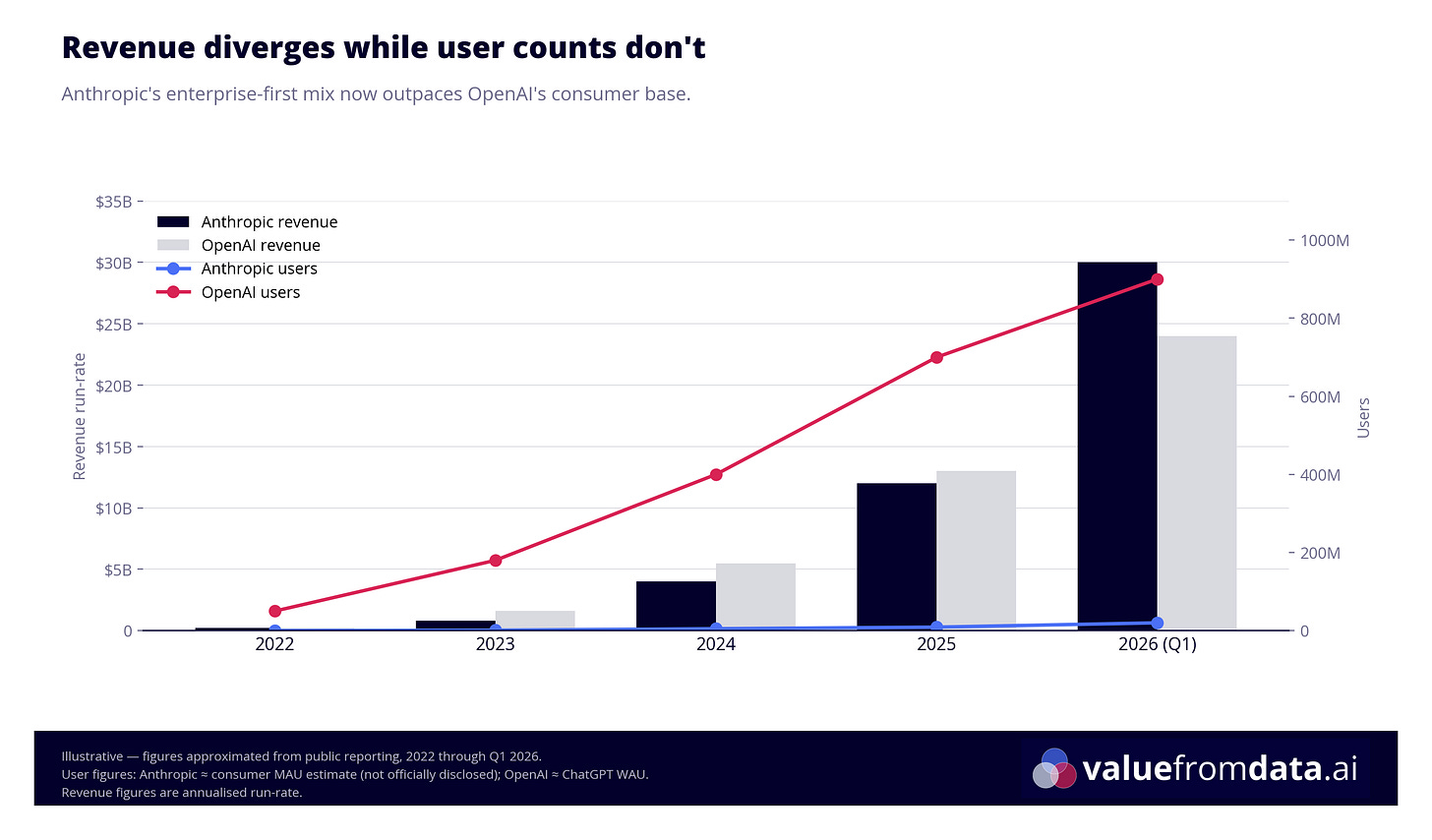

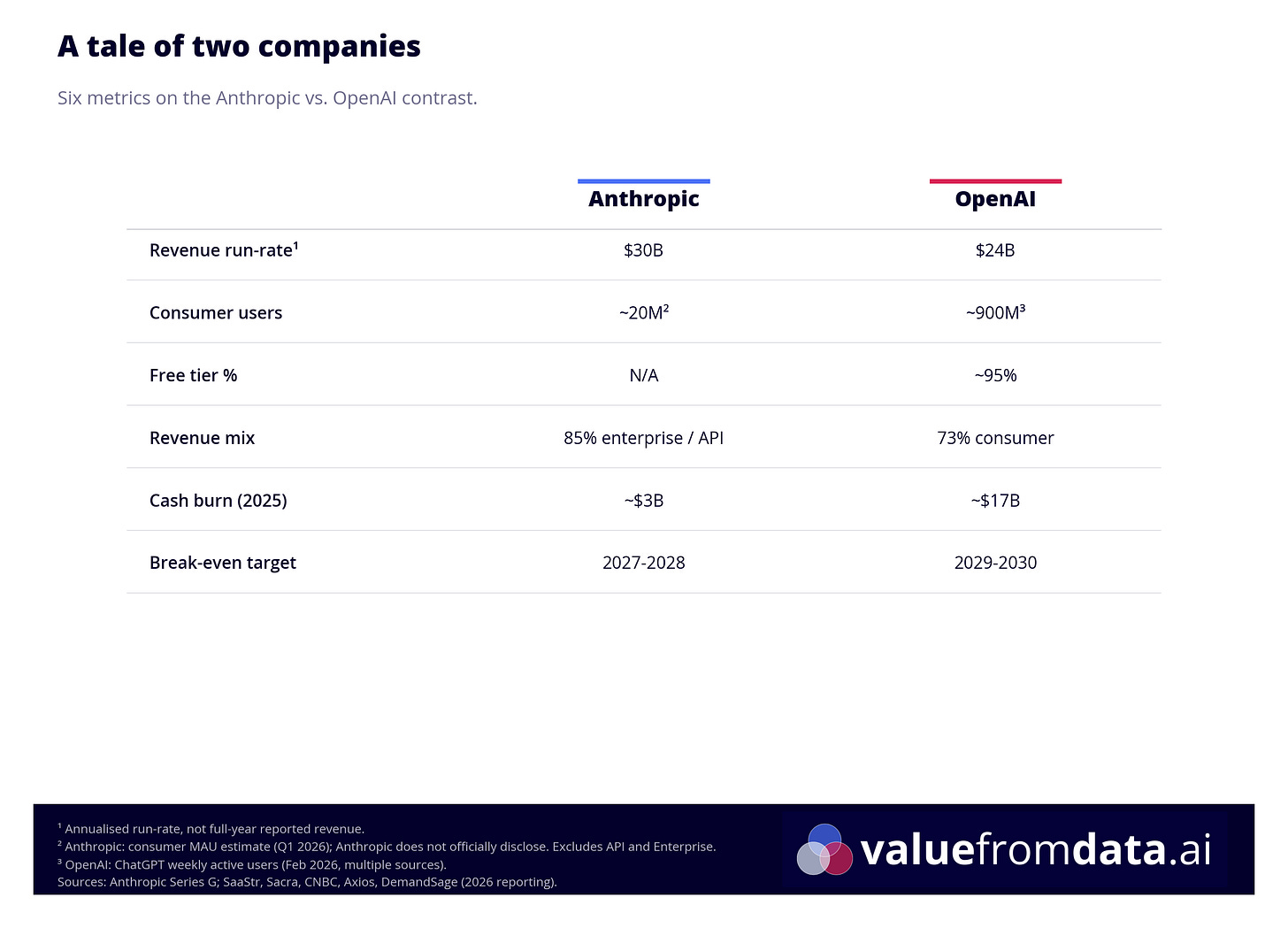

Anthropic’s annualised revenue run-rate has hit $30 billion. OpenAI’s is at $24 billion. Anthropic is generating more revenue - with a fraction of OpenAI’s user base.

Let that sink in for a moment.

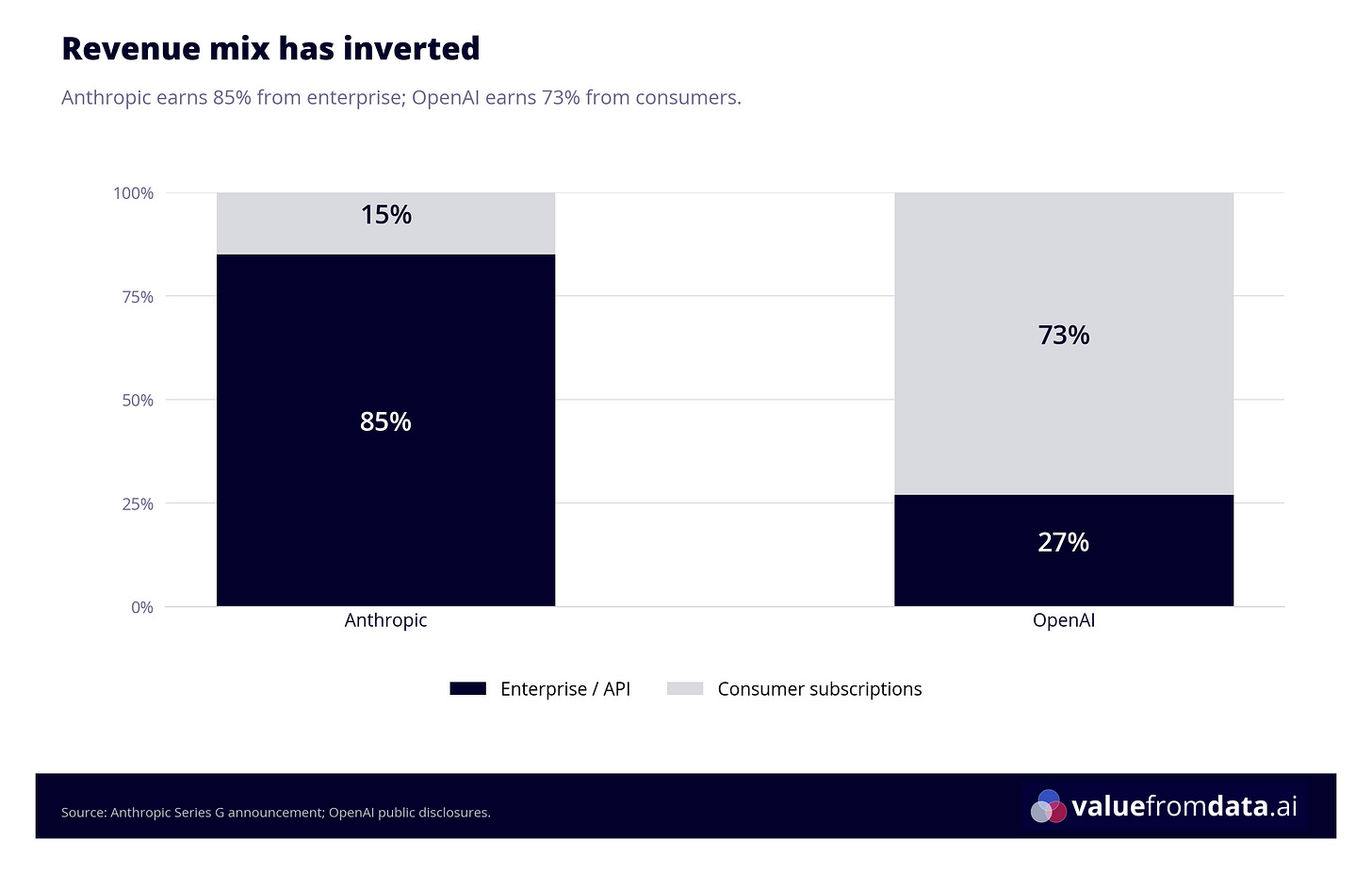

OpenAI has over 900 million weekly active users, roughly 90-95% of whom are on the free tier. Their revenue is overwhelmingly consumer-driven (73% consumer, 27% API). They’re burning approximately $17 billion in cash this year and don’t expect positive free cash flow until 2029 or later. They’ve had to introduce advertising into ChatGPT - a tacit admission that the freemium conversion funnel isn’t covering the infrastructure bill.

Anthropic’s revenue mix is almost the mirror image: 85% comes from API and enterprise deployments, just 15% from consumer subscriptions. Over 1,000 enterprise customers spend more than $1 million annually. Eight of the Fortune 10 companies use Claude. Cash burn is around $3 billion - roughly a sixth of OpenAI’s - with break-even projected for 2027-2028.

The shift is already visible in how Anthropic prices the enterprise tier itself. Through late 2025 and into 2026, Anthropic removed bundled token allowances from enterprise seats. The $20/employee monthly fee that used to include a usage allocation now covers seat access only - every token is billed at API rates on top. It’s the same bundle-then-meter move the consumer tier has been getting, applied one rung up. For customers already burning through their bundles (in some cases, the base seat was only 20% of the total bill), the economic difference is small. For Anthropic, it converts a capacity guess into metered revenue - and makes the compute-crunch problem manageable.
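A minimal Python sketch of the seat-plus-metering arithmetic. The $20 seat fee comes from the text; the $80 of monthly token spend is an illustrative figure chosen so the seat is 20% of the bill, as in the cases mentioned; the $10 per-seat allowance used for the old bundled model is purely hypothetical, since the size of the old bundles isn’t given here.

```python
def monthly_bill(seats: int, token_spend_usd: float, seat_fee_usd: float = 20.0,
                 allowance_per_seat_usd: float = 0.0) -> float:
    """Seat fees plus metered token spend.

    Any bundled allowance is consumed first; only usage above it is billed at API rates.
    """
    included = seats * allowance_per_seat_usd
    metered = max(0.0, token_spend_usd - included)
    return seats * seat_fee_usd + metered


new = monthly_bill(seats=1, token_spend_usd=80)  # seat access only, every token metered
old = monthly_bill(seats=1, token_spend_usd=80, allowance_per_seat_usd=10)  # hypothetical bundle
print(new, old, 20 / new)  # 100.0 90.0 0.2
```

Whatever the old allowance was, the gap between the two bills can never exceed it, which is why heavy users barely notice the change while Anthropic no longer has to guess how much capacity each seat will consume.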

The internal governance tension at OpenAI adds another layer. OpenAI’s CFO Sarah Friar - who helped secure over $120 billion in funding - reportedly had to rein in Sam Altman’s infrastructure ambitions, curtailing them from over $1 trillion to approximately $600 billion in capex by 2030. She was later sidelined after floating the idea of federal financial backing for AI data centres at a WSJ panel, an idea the White House’s top AI official publicly shut down. The contrast in capital discipline between the two companies is stark - and explains a lot about why Anthropic can take a more methodical approach to pricing and monetisation while OpenAI is simultaneously trying to court advertisers, close Stargate, and hit 2029 profitability targets.

Granted, the comparison isn’t perfectly apples-to-apples: Anthropic’s enterprise and API customers are burning tokens at industrial scale, not uploading images of their dog to generate Studio Ghibli pictures (which, for the record, my mum has done approximately eleven times on ChatGPT’s free tier). But the direction is clear: enterprise customers are dramatically more valuable than consumer subscribers, and Anthropic has built its entire business around them3.

This is why the third-party harness restriction makes strategic sense even beyond the infrastructure argument. Every user Anthropic moves from a subsidised subscription onto the API, or better yet, into an enterprise contract with SSO and a three-year commitment, is a user generating real, sustainable revenue.

The developer relations dilemma

Not all of Anthropic’s moves are win-win. There’s a tension in their strategy that’s worth calling out.

The developers who use tools like OpenClaw are early adopters in the truest sense - not just of AI tools, but of new technology generally. They’re the people who are on every beta, who post teardowns, who give talks at local meetups. And they’re often the same people who evangelise Claude inside their organisations, push for enterprise contracts, and bring in the deals Anthropic actually wants. Alienating them carries a real cost.

This trade-off probably explains why the OpenClaw crackdown took so long in the first place. The restriction wasn’t a new policy - the terms of service already prohibited this kind of subscription-through-third-party usage. What Anthropic enforced on April 4th was an existing rule they’d tolerated for months. In commercial terms, that delay looks irrational. In relationship terms, it makes complete sense: every week they waited was a week they weren’t picking a fight with the exact community their enterprise pipeline depends on. The commercial logic of the block was obvious on day one. The developer-goodwill logic of letting it slide was just as obvious. The lag between the two is what that tension looks like in practice.

The backlash was loud. Developers accused Anthropic of building a walled garden. The DMCA takedowns after Claude Code’s source was accidentally leaked made things worse - an AI company that trained on vast amounts of internet data issuing aggressive copyright strikes felt, to many developers, deeply hypocritical.

“Subsidising hobbyists is fun in the community-building phase. It’s not a business model.”

But declared preferences and revealed preferences are different things. People have been complaining about Ryanair for thirty years and continue to fly Ryanair in numbers that suggest they've made peace with it. The developers who switched to Claude because it had the best models will probably stay, as long as it still has the best models. The backlash was loudest in the channels where unhappy people congregate: Twitter, Reddit, Hacker News. Whether it translates into meaningful migration to OpenAI or open-source alternatives is a different question. In my limited personal experience running meetups in Barcelona, the migration narrative doesn't match what I see day to day. That's a single local data point, and I know it would be wrong to generalise from it globally.

The most price-sensitive users who might switch are probably not the customers Anthropic was targeting anyway. Early-stage startup founders, freelancers, hobbyists - the people for whom the difference between $200 and $500 per month is meaningful. For an enterprise that is (or should be) generating returns from its AI spend, that delta barely registers. An individual tries to minimise cost; an enterprise that’s good at its job tries to maximise return on investment. Subsidising hobbyists is fun in the community-building phase. It’s not a business model.

Anthropic’s strategy is fundamentally enterprise-first, and the financial data says that’s the right call. But platforms that lose developer goodwill tend to discover the cost later, when those same developers start recommending alternatives in the rooms where procurement decisions get made. That’s not a risk that shows up on the balance sheet immediately.

Watch this space.

What does this mean for you?

The restriction on third-party harnesses is reasonable. I said as much in a LinkedIn comment when it happened: use their product, get heavily subsidised tokens; use someone else's product, pay the market rate. Especially now that Anthropic is building OpenClaw-like functionality into Claude Code and Co-Work, it makes sense they'd rather subsidise direct customers than folks routing through third-party tools.

As a Claude customer, I’m not unhappy about it. I’d rather Anthropic subsidise my usage than subsidise OpenClaw’s. And I think it’s genuinely good to discourage the median user from running autonomous agents with the kind of access OpenClaw requires.

But the broader pattern - subsidise to capture, then tighten as you extract - is something everyone building with AI should be thinking about. Here’s what I’d take away:

If you’re an individual power user: The subsidised era is ending, gradually then suddenly. Plan for API-rate pricing eventually, even on subscription plans. The value is real, but the pricing will catch up.

If you’re an enterprise evaluating AI tooling: The lock-in isn’t uniform, and it doesn’t break cleanly along vendor lines. It’s worth thinking through three types of lock-in separately: contract lock-in (what you’ve committed to spend and for how long), data/context lock-in (where your organisation’s history and integrations live), and model lock-in (how easy it is to swap the underlying AI model).

The table below isn't exhaustive, but it illustrates how different this looks across the most widely used products. The pattern that stands out: Microsoft has deliberately kept model lock-in low (multi-model strategy: GPT, Claude, and Gemini all available via Copilot) while maintaining very high contract and data lock-in through enterprise licensing and the M365 ecosystem. That's a smart platform play - they don't need to win on models, because the platform is already indispensable.

The most dangerous combination isn’t high model lock-in - it’s high data/context lock-in with a vendor whose pricing you can’t predict. Negotiate contracts accordingly, and pay close attention to where your company’s institutional knowledge and integrations actually live.

Some people have raised a different point of view about Anthropic that's worth flagging here. I'm essentially describing Anthropic's go-to-market strategy as the Microsoft model, but some worry Anthropic might turn out to be more of a Google. The Microsoft model goes more or less like this: build out an ecosystem of applications and package them in a bundle for enterprise buyers. Enterprises will prefer a single vendor that does everything over "superior" products from many vendors, because it means consolidated billing, unified security, and one vendor relationship. It's why Teams blew Slack and Zoom out of the water despite being a worse product (especially around 2020, when remote working became a must).

Meanwhile, the Google approach has been more of a "let's ship tons of new products and see what sticks". They launch with a more experimental mindset (early betas, test in the open, kill what doesn't scale), which results in many beloved (but insufficiently popular) products being removed. KilledByGoogle.com is the famous "product graveyard" for all those products. Killing poorly performing products makes sense; otherwise you end up stretched too thin and unable to double down on the things that'll actually move the needle. But it's also a reason to be wary of migrating your workflows into something that may be deprecated 6-12 months later. Some worry Anthropic is in its "launch too many things" era, and won't be able to support them all even if they get decent adoption.

My view: Anthropic’s expansion into design, agent infrastructure, and its marketplace looks more like the Microsoft model than the Google one. Whether they have the execution discipline to sustain it is the bet each of us has to make when we choose to integrate our workflows deeply into one of their products.

None of this means Anthropic is immune to defection. As the unit economics shift, some enterprises will test the market - especially for non-critical workloads that could plausibly run on OpenAI, Gemini, or an open model via AWS Bedrock. But wholesale migration is hard: SSO, audit trails, connector integrations, and multi-year cloud-channel commitments all raise the switching cost. The more realistic pattern is silent reallocation at the margin, where low-leverage usage quietly moves elsewhere and Claude keeps the use cases where it wins. That’s not a bad outcome for Anthropic - the spend that stays is higher-margin spend.

If you’re building products on top of LLMs: The Intercom path is the smart strategic play. Start by throwing money at the solution - pay for Opus, prove the value, move fast. Then, once you’ve demonstrated what your AI features are worth, evaluate whether to migrate high-volume workloads to fine-tuned open models on your own infrastructure. Prove value first, then optimise unit economics.

The next twelve months will tell us a lot. If Anthropic’s enterprise contracts hold, if the marketplace grows, if Claude Code and Co-Work find genuine product-market fit - the platform play is working. If developers defect en masse to OpenAI or open-source alternatives, if the agent orchestration layer gets commoditised before the marketplace takes hold, if the zero-commission honeymoon ends badly - the window may already be closing.

Either way, the era of infinite subsidised compute is over. Plan accordingly.

Full disclosure: I wrote this article with a lot of help from Claude Code (research/fact-checking, formatting, argument scrutiny etc.). The irony isn’t lost on me. In a way, this also means I have skin in the game - as a power user and paying subscriber, these changes affect me directly. Make of that what you will.

Thanks to Justin Strharsky, Matt Roberts, Panos Lazaridis, and Mo Shana’a for reading drafts and sharing their comments. Any remaining errors or bad calls are mine.

The car analogy I use elsewhere in this piece to explain the API vs subscription distinction deserves a healthy dose of scepticism, and I'm the first to say so. My last serious engagement with the automobile industry was a project I did for Lamborghini during my master's - and even then I had two mechanical engineers to tell me what I was getting wrong about the technical side.

There’s an irony worth flagging. A European organisation that adopts Mistral specifically to avoid US (and, increasingly, Chinese) models is not reducing its vendor dependence - it’s concentrating it into a smaller pool. That’s a different kind of risk from the one the sovereignty framing usually emphasises. It’s less about national security and more about anti-competitive exposure: fewer credible providers means less pricing pressure, fewer negotiating levers, and more downside if your chosen provider stumbles. Whether that’s a price worth paying depends on how regulated you are, but it’s worth naming - the “sovereign” version of lock-in is still lock-in.

A consumer-heavy product like ChatGPT can make a lot of money. Google and Facebook are the proof: massive consumer bases monetised via advertising. The user isn’t the customer - the user is the product. Whether that model translates cleanly to LLMs is a different question and I don’t have a firm view. On the one hand, users already accepted ads-for-free in YouTube, Search, and the Facebook feed, so there’s precedent. On the other, something about an explicit “assistant” serving you ads feels categorically different from an algorithmic feed that happens to include them - the trust relationship is closer, the perceived manipulation risk higher. I can imagine this going either way, and this footnote is not the place to settle it.
